CNF:Machine Learning

This is the page for the CNF-Machine Learning project. It will be updated frequently with reports about the progress of the project.

The Problem

Traditional tracking algorithms are computationally intensive, especially in high-luminosity experiments with multi-track final states, where all combinations of segments in the drift chambers have to be considered to find the best track candidates. At high luminosity the number of random segments (unrelated to the tracks) increases, and with it the number of possible combinations, which makes the whole process slower.

The Goal

Machine learning can recognize which patterns are valid, so that the correct track is found faster. The model will be trained on real, pre-labeled data; once trained, it will label each track combination as valid or not.

The Data

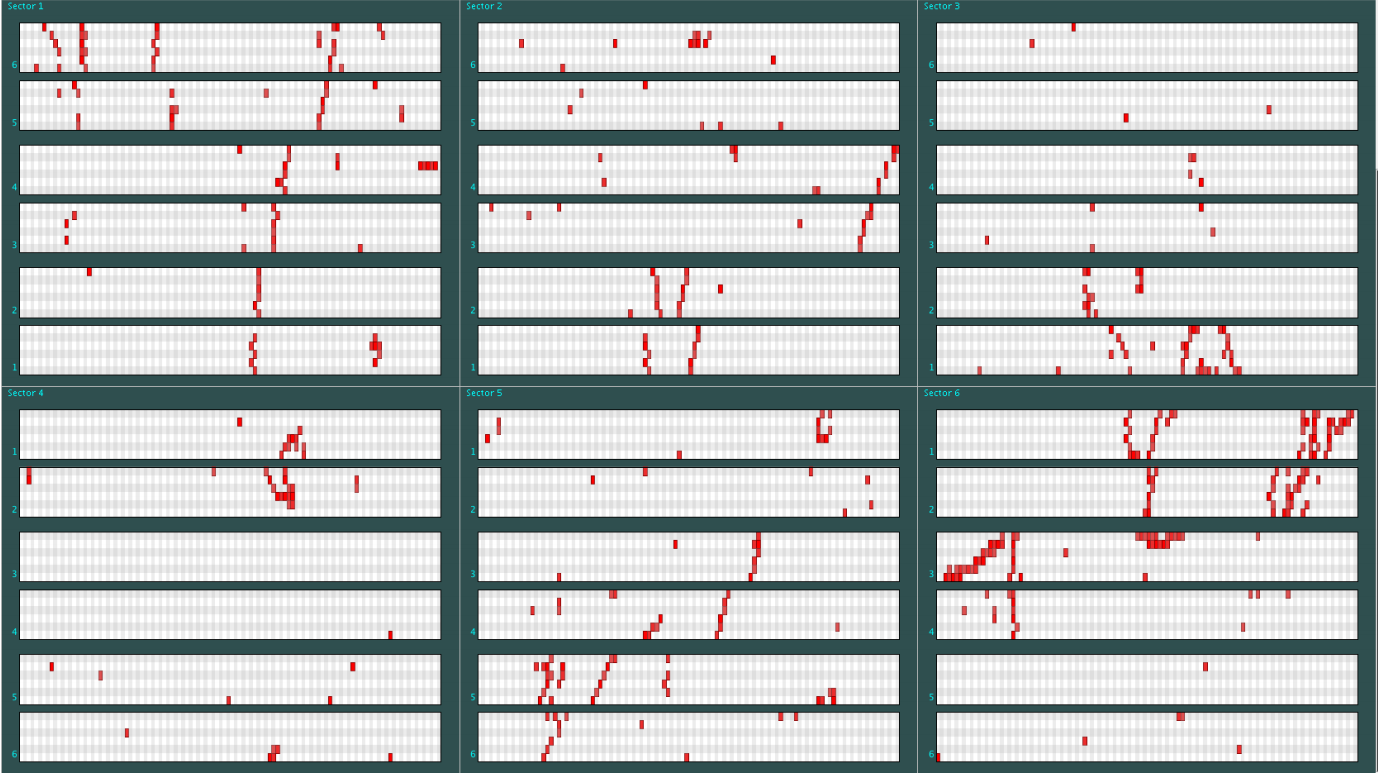

The drift chambers consist of 6 layers of 6 wires with 112 sensors each, for a total of 4032 sensors (see picture). The data indicate whether each sensor has detected a hit or not. A hit may belong to the trajectory we want to track, or it may be irrelevant (noise). In the labeled data, each row (event) is one possible combination that forms a track, and each column (feature) is the state of one sensor (hit or no hit). The label states whether the combination produces a valid track or not. An example of the input to be used by the model can be found in [1].
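The layout described above can be sketched as a mapping from a sensor address to a feature column. This is only an illustration: the function names `flat_index` and `encode_hits`, the zero-based addressing, and the layer-major column order are assumptions, and the actual column order in the data files may differ.

```python
# Geometry taken from the text: 6 layers x 6 wires x 112 sensors.
N_LAYERS, N_WIRES, N_SENSORS = 6, 6, 112
N_FEATURES = N_LAYERS * N_WIRES * N_SENSORS  # 4032 binary features per row


def flat_index(layer, wire, sensor):
    """Map a zero-based (layer, wire, sensor) address to a column index.

    Assumed ordering: layer-major, then wire, then sensor.
    """
    if not (0 <= layer < N_LAYERS
            and 0 <= wire < N_WIRES
            and 0 <= sensor < N_SENSORS):
        raise ValueError("sensor address out of range")
    return (layer * N_WIRES + wire) * N_SENSORS + sensor


def encode_hits(hits):
    """Build one binary feature row from an iterable of (layer, wire, sensor) hits."""
    row = [0] * N_FEATURES
    for layer, wire, sensor in hits:
        row[flat_index(layer, wire, sensor)] = 1
    return row


# Hypothetical combination with three hits.
example_row = encode_hits([(0, 0, 5), (0, 1, 6), (5, 5, 111)])
```

Each such row, together with its valid/invalid label, would correspond to one training example for the classifier.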

Timeframe

Reports

Week 1

Create the pipeline

- Load data in Spark Dataframes

- Split data in training/validation sets

- Experiment with different classification methods

  - Tree-based

  - SVM

- Train Model

- Validate

- Report accuracy and confusion matrix
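
The split and reporting steps can be sketched without any framework. This is a minimal plain-Python illustration, not the Spark pipeline itself: the helper names, the 80/20 split fraction, and the toy labels are all assumptions made for the example.

```python
import random


def train_validation_split(rows, validation_fraction=0.2, seed=0):
    """Shuffle the rows reproducibly and split them into (training, validation)."""
    rng = random.Random(seed)
    shuffled = list(rows)
    rng.shuffle(shuffled)
    n_val = int(len(shuffled) * validation_fraction)
    return shuffled[n_val:], shuffled[:n_val]


def confusion_matrix(y_true, y_pred):
    """Count tp, fp, tn, fn for binary labels, where 1 means a valid track."""
    counts = {"tp": 0, "fp": 0, "tn": 0, "fn": 0}
    for truth, pred in zip(y_true, y_pred):
        if pred == 1:
            counts["tp" if truth == 1 else "fp"] += 1
        else:
            counts["fn" if truth == 1 else "tn"] += 1
    return counts


def accuracy(counts):
    """Fraction of combinations whose predicted label matches the true label."""
    total = sum(counts.values())
    return (counts["tp"] + counts["tn"]) / total if total else 0.0


# Toy labels, invented for illustration only.
y_true = [1, 0, 0, 1, 0, 0]
y_pred = [1, 0, 1, 0, 0, 0]
counts = confusion_matrix(y_true, y_pred)
```

Because most combinations are expected to be invalid, the confusion matrix is worth reporting alongside accuracy: a model that labels everything as invalid could still score a high accuracy.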