PDR.PODM Distributed Memory

Issues

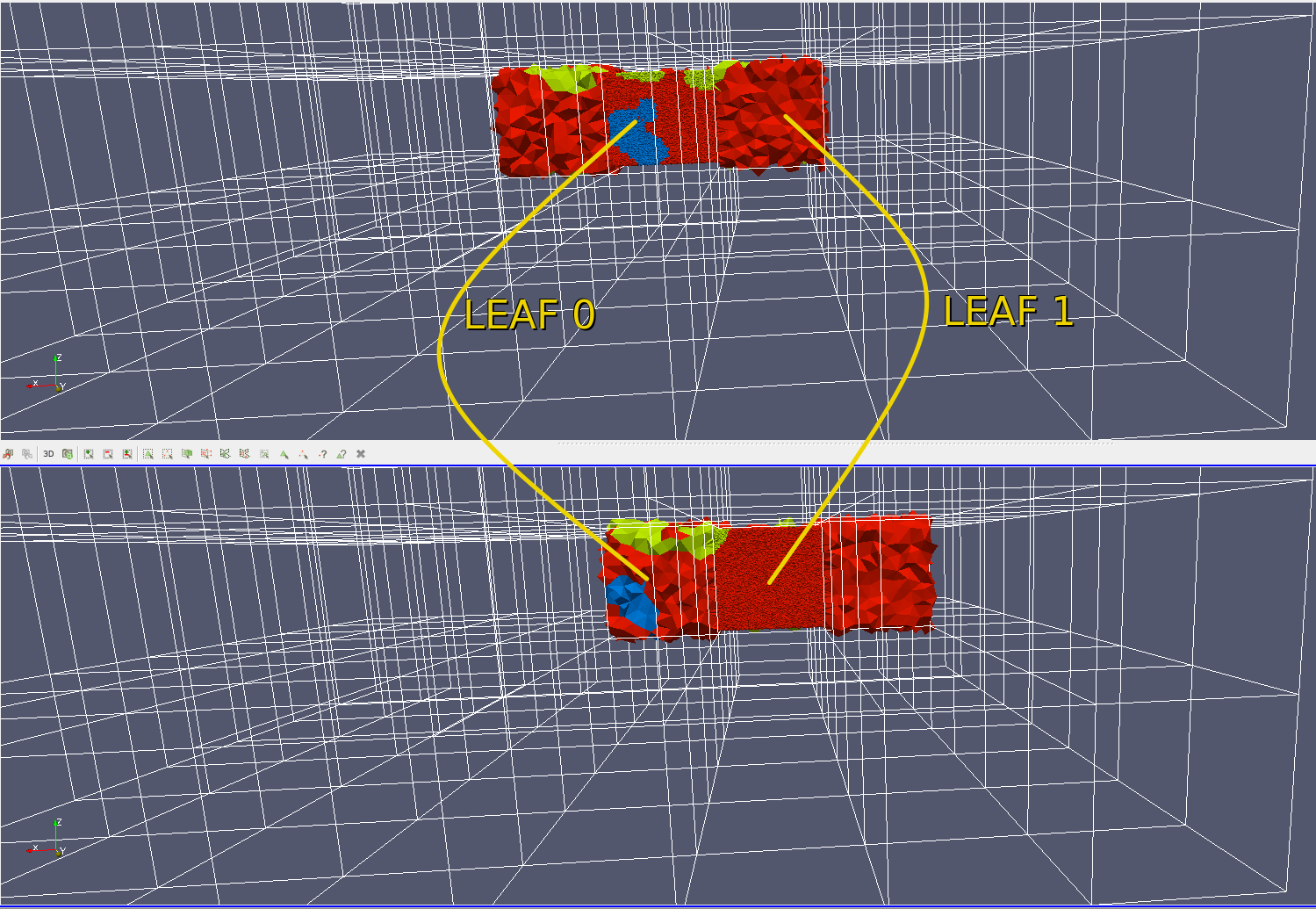

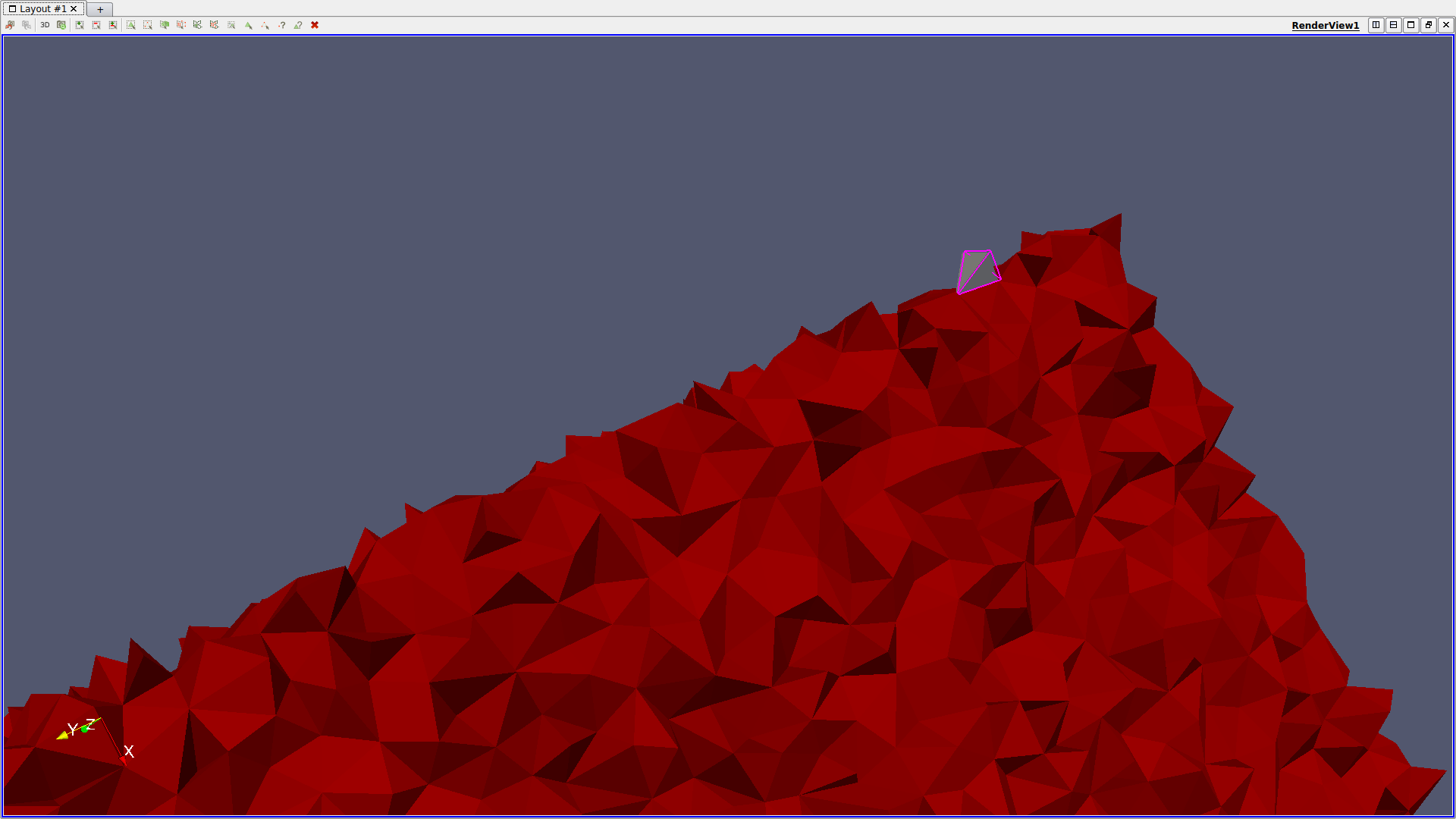

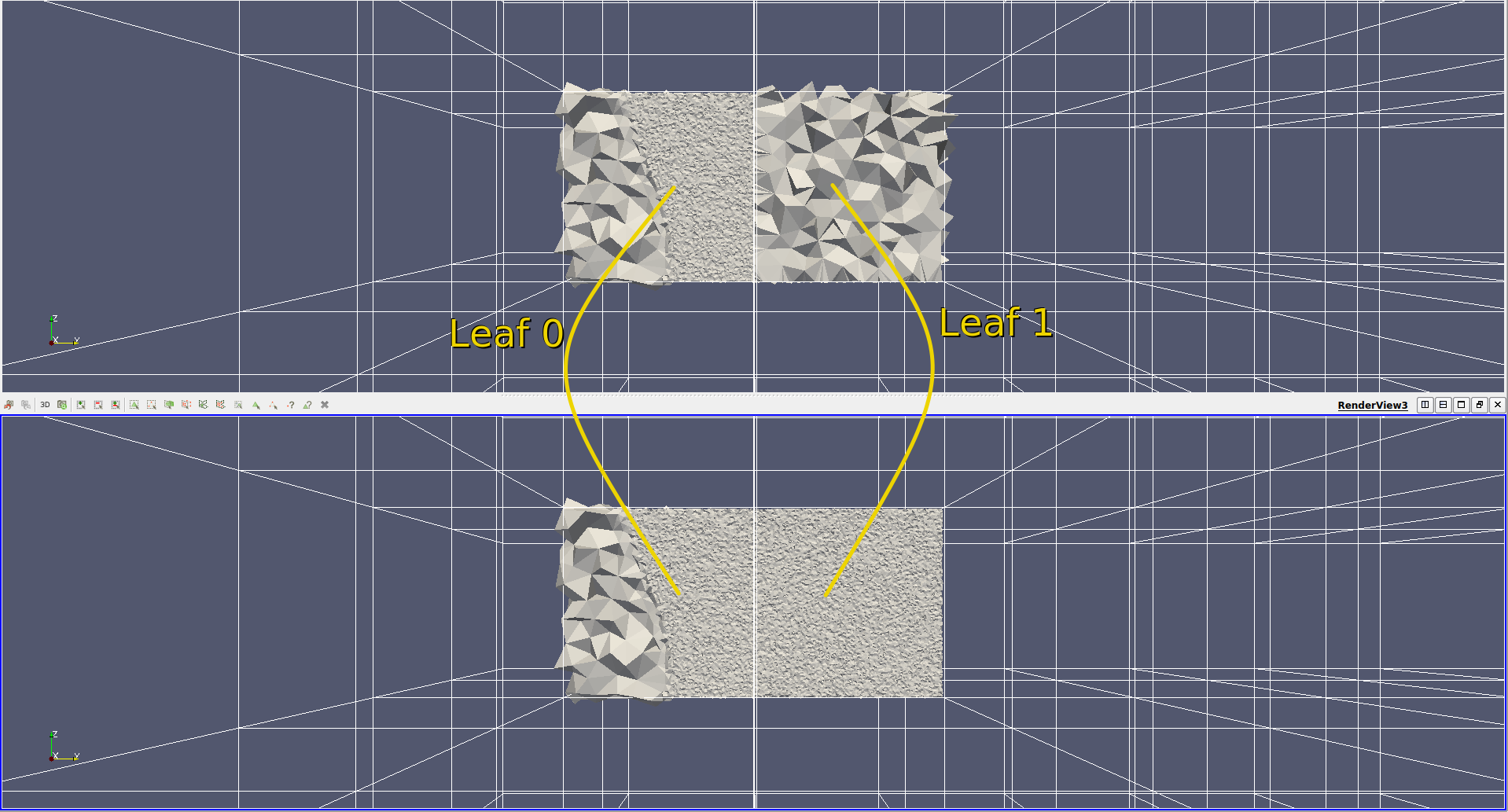

- Leaves refined by worker nodes are not reused. The picture below shows the issue: two neighbouring leaves (0 and 1) are each refined as the main leaf (0 top, 1 bottom) but are not refined as a neighbour.

- The current algorithm uses neighbour traversal to distribute cells to the octree, which leaves some cells out in some cases. This can happen when a cell belongs to an octree leaf based on its circumcenter but does not have any neighbour in the same leaf.

- During unpacking, the incident cell of each vertex is not set correctly. Specifically, when the initial incident cell is not part of the work unit (Leaf + LVL.1 Neighbours) and thus is not local, it is set to the infinite cell. This causes PODM to crash randomly in some cases.

- Another issue comes from the way the neighbour IDs of each cell are updated with global IDs. The code that updates a cell's connectivity takes each neighbour's pointer, retrieves its global ID and writes it to the neighborID field. However, when the neighbour is part of another work unit's leaf and is not local, this pointer is NULL. In this case the neighborID field is wrongly reset to the infinite cell ID, which, as a result, permanently discards the connectivity information.
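The neighbour ID problem described in the last item can be illustrated with a minimal C++ sketch. The `Cell` layout, the `INFINITE_CELL_ID` constant and the `updateNeighborIDs` function are hypothetical stand-ins, not the actual PODM types; the sketch only assumes that a cell stores four neighbour pointers next to four neighbour IDs. The point it shows is that a NULL pointer should leave the stored ID untouched rather than overwrite it.

```cpp
#include <array>
#include <cstddef>
#include <cstdint>

// Hypothetical, simplified types; the real PODM cell layout may differ.
constexpr std::int64_t INFINITE_CELL_ID = -1;

struct Cell {
    std::int64_t globalID = INFINITE_CELL_ID;
    std::array<Cell*, 4> neighbor{};           // nullptr when the neighbour is not local
    std::array<std::int64_t, 4> neighborID{};  // global IDs of the four neighbours
};

// Refresh neighbour IDs from local pointers only.
// A null pointer means "not local", not "infinite cell", so the
// previously stored global ID is kept instead of being reset.
void updateNeighborIDs(Cell& c) {
    for (std::size_t i = 0; i < c.neighbor.size(); ++i) {
        if (c.neighbor[i] != nullptr) {
            c.neighborID[i] = c.neighbor[i]->globalID;
        }
        // else: leave c.neighborID[i] as it is
    }
}
```

With this guard, a neighbour that belongs to another work unit keeps its global ID across updates, so the connectivity can be restored once that neighbour becomes local again.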

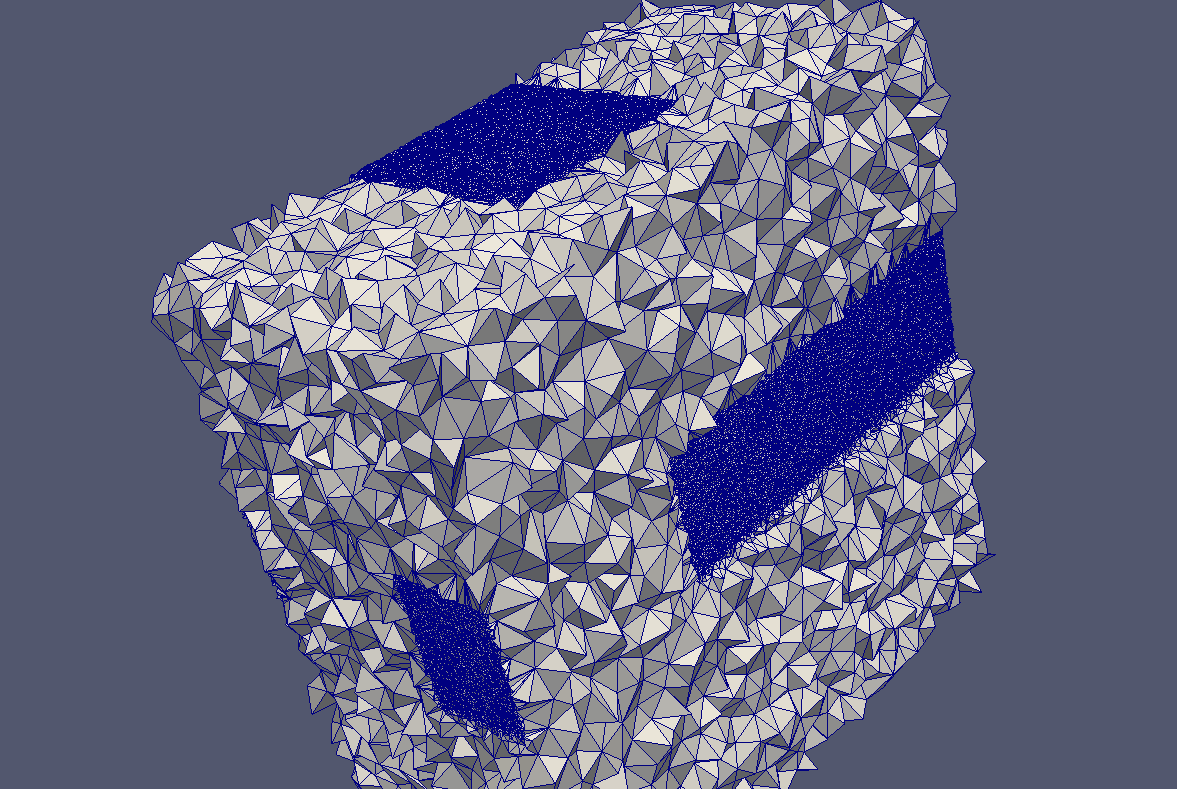

- The function that unpacks the required leaves before refinement does not discard duplicate vertices. Duplicate vertices are always present: each leaf is packed and sent individually, so neighbouring leaves both include their shared vertices. Because duplicates are not handled, multiple vertex objects are created for what is geometrically the same point. Two cells that share a vertex can therefore hold pointers to two different vertex objects, and each cell sees a different state for the same vertex.
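One possible way to discard duplicates during unpacking is sketched below in C++. `Vertex`, `VertexPool` and `getOrCreate` are hypothetical names, not PODM code. The sketch assumes that a shared vertex arrives with bit-identical coordinates in every leaf that contains it, so an exact coordinate comparison is enough to recognise it; if vertices carry a global ID, that ID would be a more robust key.

```cpp
#include <array>
#include <cstddef>
#include <map>
#include <memory>
#include <vector>

// Hypothetical, simplified vertex; the real PODM vertex holds more state.
struct Vertex {
    std::array<double, 3> p;
};

// Hands out exactly one Vertex object per distinct point.
class VertexPool {
public:
    // Returns the existing vertex for these coordinates,
    // or creates and registers a new one on first use.
    Vertex* getOrCreate(const std::array<double, 3>& p) {
        auto it = byPoint_.find(p);
        if (it != byPoint_.end()) {
            return it->second;  // duplicate: reuse the first object
        }
        storage_.push_back(std::make_unique<Vertex>(Vertex{p}));
        Vertex* v = storage_.back().get();
        byPoint_.emplace(p, v);
        return v;
    }

    std::size_t size() const { return storage_.size(); }

private:
    std::vector<std::unique_ptr<Vertex>> storage_;      // owns the vertices
    std::map<std::array<double, 3>, Vertex*> byPoint_;  // exact-coordinate lookup
};
```

If every unpacked leaf requests its vertices through the same pool, cells from neighbouring leaves end up pointing at the same vertex object and therefore see the same vertex state.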

Fixes